I’m starting a delayed series of blog posts to report on my trip to the 2012 Agile Coach Camp Canada that took place in Ottawa at the end of June. In each post, I plan to cover one interesting topic or session that took place there. I want to start with Agile in Professional Services.

This session was led by Richard Gore and resulted in a lively discussion. Insights came from several different directions and I may not even remember all of them anymore.

Lean Economic Models and Real Options

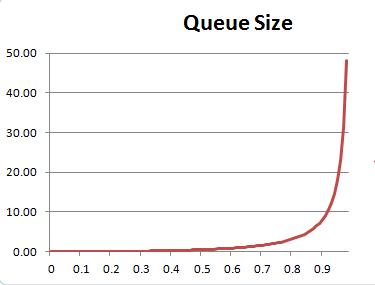

Professional services projects are especially susceptible to large transfer batches: user stories, features, and the smaller projects that make up larger ones accumulate, integration happens late, and only then is anything delivered to the customer. Both customers and vendors of such projects need a serious conversation about batch sizes! “We want you to deliver this whole thing to us at the end” only reveals ignorance. “We will demo and push it every two weeks” isn’t much better. I know my audience may be getting tired of this recommendation, but Donald Reinertsen’s The Principles of Product Development Flow is a must-read. Batch sizes affect economic outcomes, so start learning about them.
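To make "batch sizes affect economic outcomes" a bit more concrete, here is a minimal sketch of the U-curve trade-off between transaction cost and holding cost. It is a textbook-style simplification (an economic-order-quantity model), not something presented in the session, and the function names and numbers are invented purely for illustration.

```python
import math


def cost_per_item(batch_size, transaction_cost, holding_cost):
    """Hypothetical per-item cost of delivering work in batches.

    transaction_cost: assumed fixed cost of one transfer (integration,
        deployment, acceptance), spread across the items in the batch.
    holding_cost: assumed cost of one item waiting while one more item
        joins the batch; on average an item waits for half the batch.
    """
    return transaction_cost / batch_size + holding_cost * batch_size / 2


def optimal_batch_size(transaction_cost, holding_cost):
    """Batch size that minimizes cost_per_item (where its derivative is zero)."""
    return math.sqrt(2 * transaction_cost / holding_cost)


if __name__ == "__main__":
    # Illustrative numbers only: each transfer costs 8, each item costs 1 to hold.
    for size in (1, 2, 4, 8, 16):
        print(size, cost_per_item(size, transaction_cost=8, holding_cost=1))
    print("optimum:", optimal_batch_size(8, 1))
```

Under these assumptions, both very small and very large batches are expensive, and lowering the transaction cost (for example, by automating deployment) moves the optimum toward smaller batches. That is the kind of conversation vendors and customers could have instead of picking a batch size by habit.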

One particular kind of batch transfer deserves special attention: the one near the end of the Vendor’s part of the project’s value stream, for example, from ready-to-deploy to deployed. A very recent insight, presented at LSSC’12 by the German duo of Arne Roock and Markus Andrezak, is that avoiding a large batch transfer at that part of the value stream increases the value of the options upstream! (A video of their presentation is available, and their paper can be found in the conference proceedings.) On the flip side, allowing a large batch transfer there increases the project risk. Both the Vendor and the Customer are exposed to that risk and neither should ignore it.

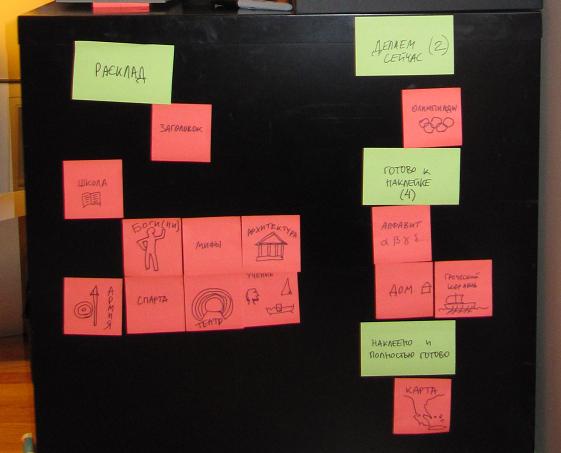

Professional services projects are also susceptible to another combination of anti-patterns: converting options to obligations at the start, followed by backlog-driven development. Backlog-driven development is economically sub-optimal, as Mike Burrows demonstrated in his LSSC’12 talk (and here Reinertsen’s economic models of flow come in handy again!), but there is more. Once you’ve exercised all your options, you have none left and need to replenish them. If your option-replenishment process isn’t working, that is a serious business problem, and improving the development process cannot solve it.

Design the Engagement, Not the Solution

This six-word gem uttered by Andrew Annett (and the six words included two articles) opened the door to applying our improved understanding of project economics to practical situations. The conversation between the Vendor and the Customer on how they approach building their relationship comes before the conversation about managing their project.

The two sides “should talk from the positions of equality” and “neither side should have the upper hand”. Only then does it become possible for the Vendor and the Customer to have a conversation about optimal economic parameters and reducing project risk. If the Vendor is more Agile-inclined, selling Agile to the Customer as “Agile” may be counter-productive. It is better to present a choice between two options: low-risk and high-risk.

In the real world, the two sides are quite often not on an equal footing. One of them is much more powerful, can push the other around, and can shift the burden of underwriting the shared risk onto them. This is an abusive relationship. Recognize it when you see it, know that it is the real problem, and don’t expect Agile to solve it.

A short summary of this session could probably fit in one tweet: design the engagement, not the solution; neither side has the upper hand; lean economic models; real options.